🤖 3 Short Essays About Artificial Intelligence | Ethics, ChatGPT, AI vs The Law

+ A funny bonus

In today’s issue:

The BIGGEST ethical and societal questions around AI 🧵

Why ChatGPT is not as revolutionary as you think

Artificial Intelligence and the Law

But first, an announcement:

Hi all!

After trying out new content last week, I realized two things:

I was more engaged while writing about AI than about the previous topics, and the last issue was too long. We covered digital humans in depth, but it was too much.

So now, I will try a different format.

I will send out a series of atomic essays, 250 to 400 words each, in every newsletter. Let’s try that out!

Brandon

The BIGGEST Ethical and Societal Questions Around AI 🧵

The rise of AI is comparable to the internet coming online

There are ethical and societal questions around the rise of AI that need to be addressed. Some are job displacement, data privacy, and the question of personhood. But here are 5 more that are on many people’s minds…

1. WHAT’S THE BEST USE IT COULD HAVE?

Ideally, it would automate repetitive tasks, freeing up humans to focus on innovation-driven activities. Companies are investing heavily in the development of AI and we are only seeing the tip of the iceberg, but one thing is sure: their goal is to increase productivity.

This is achievable because AI can understand languages, process data and execute many tasks faster, and often more accurately, than humans.

2. WILL IT BE UNSTOPPABLE?

AI isn't without limitations. Its capabilities are limited by the quality of the data it is trained on. If the data is biased or incomplete, the AI system's performance will be affected.

3. HOW IS IT REALLY TRAINED?

AI is trained to understand and interact with humans, using data from real conversations and simulations. As a result, AI systems can perform tasks such as customer service and data entry, and even creative tasks such as writing, editing, and translation.

They rely on techniques such as Natural Language Processing (NLP) and Machine Learning (ML).

4. WILL IT KILL US?

I know you’ve been thinking about this one. 😂

AI systems can be vulnerable to malicious attacks and can be used for unethical purposes if not properly managed. AI is not autonomous, but it still needs to be regulated so that, having been created by humans, it remains controlled by humans.

5. CAN WE BE FRIENDS WITH IT?

If it takes a human form, such as a humanoid robot… we could, easily.

They could even be our coworkers. Virtual Digital Workers (DVWs) are a cost-effective and efficient way to streamline processes and take on a range of tasks.

Prototypes already exist, and many predict there will be a time when humanoid robots will keep us company, help elders with their personal tasks and take kids to school safely.

Why ChatGPT Is Not As Revolutionary As You Think

It Can’t Think

ChatGPT can only act reactively, with little knowledge about how the world works.

It cannot reason. It can’t plan actions. When producing an output, it can only predict what the next word should be, based on the billions and billions of words it’s been trained on.

Large Language Models (LLMs) like GPT-3 (the version you know) currently don’t have a way of integrating reasoning.

It’s hard to believe that next-word prediction produces the output GPT-3 does, but that’s what it is.
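To make "next-word prediction" concrete, here is a toy sketch. It is nowhere near how GPT-3 is actually built (that uses a huge neural network), and every name in it (`train_bigram_model`, `predict_next`, `generate`) is made up for illustration. It only counts which word tends to follow which in a tiny text, then keeps picking the most likely follower.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(model, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    candidates = model.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


def generate(model, start, max_words=5):
    """Build a sentence by repeatedly appending the predicted next word."""
    output = [start]
    for _ in range(max_words):
        nxt = predict_next(model, output[-1])
        if nxt is None:
            break  # the model has nothing to say after this word
        output.append(nxt)
    return " ".join(output)


# Toy training text (an assumption for this example, not real training data).
corpus = "the cat sat on the mat and the cat slept on the sofa"
model = train_bigram_model(corpus)

print(predict_next(model, "the"))
print(generate(model, "on", max_words=2))
```

Notice there is no plan and no reasoning anywhere in that code, only counting and picking. A real model is vastly more sophisticated, but the loop of "look at what came before, pick a likely next word, repeat" is the same idea.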

It’s not as revolutionary as it seems. It is impressive. But it can’t be cataloged as a step toward Human-Level AI or Artificial General Intelligence (AGI)… yet.

And if you haven’t tried it yet, go to OpenAI’s website and ask GPT anything.

Watermarking

Remember how I said it simply predicts the next word?

That’s how it’s possible to watermark the output it produces, even though it is… text. By comparing a piece of text against its own predictions, a model can tell whether that text was likely produced by a model. If a sentence is altered, the watermark is broken.
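Here is one way such a watermark could work, as a toy sketch. I don't know the details of OpenAI's actual scheme, so this is a stand-in based on the general "green list" idea, and all the names (`is_green`, `pick_watermarked`, `green_fraction`) are hypothetical. The generator quietly prefers word pairs that pass a secret-looking test, and the detector checks how many pairs in a text pass it.

```python
import hashlib


def is_green(prev_word, word):
    """Deterministically mark roughly half of all (previous word, word) pairs as 'green'."""
    digest = hashlib.sha256(f"{prev_word}|{word}".encode("utf-8")).digest()
    return digest[0] % 2 == 0


def pick_watermarked(prev_word, candidates):
    """Among plausible next words, prefer the first one that is green after prev_word."""
    for word in candidates:
        if is_green(prev_word, word):
            return word
    return candidates[0]  # no green option: fall back to the top candidate


def generate_watermarked(start, candidates, length):
    """Generate `length` extra words, nudging every choice toward green pairs."""
    words = [start]
    for _ in range(length):
        words.append(pick_watermarked(words[-1], candidates))
    return " ".join(words)


def green_fraction(text):
    """Share of adjacent word pairs that are green (about 0.5 expected for ordinary text)."""
    words = text.lower().split()
    pairs = list(zip(words, words[1:]))
    if not pairs:
        return 0.0
    return sum(is_green(a, b) for a, b in pairs) / len(pairs)


# Made-up vocabulary standing in for a model's candidate next words.
vocab = ["robots", "learn", "quickly", "from", "human", "data", "every", "day"]
marked = generate_watermarked("today", vocab, 20)
print(green_fraction(marked))
print(green_fraction("this sentence was typed by a person without any hidden pattern"))
```

Text produced this way should score well above one half, while ordinary text should hover around it. Swap or rewrite enough words and the score drifts back down, which is why editing a sentence breaks the watermark.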

When will it be a breakthrough?

When it can perform reasoning tasks.

Could it be that ChatGPT is more powerful than we think and it can perform reasoning tasks? Maybe. We don’t know yet. But the breakthrough will come when it can understand the underlying structure of the information and its meaning.

It’s only a connectionist model.

It can’t understand causality or make logical deductions.

These are still active areas of research in artificial intelligence.

But what is true is that OpenAI has achieved striking results in natural language processing with it: text generation, question answering and translation, for example. And it could, someday, build understanding.

Is it an advancement, then?

It's a step toward surfing the breadth of human knowledge. The internet has been fed with human knowledge for years, and through GPT we can browse that vast knowledge in a way akin to human communication. It is progress, because:

An advance in connectionist models advances the entire field

From what we understand, the human mind is a connectionist system, so if the goal is to replicate it, this is an advancement

Implementations of ChatGPT spur the development of future tools

Is it revolutionary, then?

No. It’s only reactive and it can’t think. It doesn’t understand the world yet.

But when it does… Will it understand it as we do?

AI vs the Law

Remember the article I asked an AI to write?

Who should own the content? The AI or me?

I didn’t write it, the AI did. But it used billions and billions of words written by other writers before to produce the outcome. Do those writers own the copyright?

Copyright requires a minimum of creative input from a human, since by law only humans can own copyright.

Then again, when a human creates, say, art, they are only remixing their own experiences and ideas from others… So who owns it?

Copyright is best looked at on a case-by-case basis. It’s a whole spectrum, and technically, if I used an AI tool to transform the product of another AI, that… is new work. A minimum of creativity was put into asking the second AI what changes to make.

Science and literature books, for instance, borrow from earlier published work and from knowledge that was already known.

This means not everything in art or writing or music is new, original or even protectable by law.

So, no, you don’t have to pay Van Gogh a commission for asking DALL-E to generate an image of The Little Prince in the style of Starry Night. And there isn’t a clear answer to who owns that work. But it’s certainly not the AI.

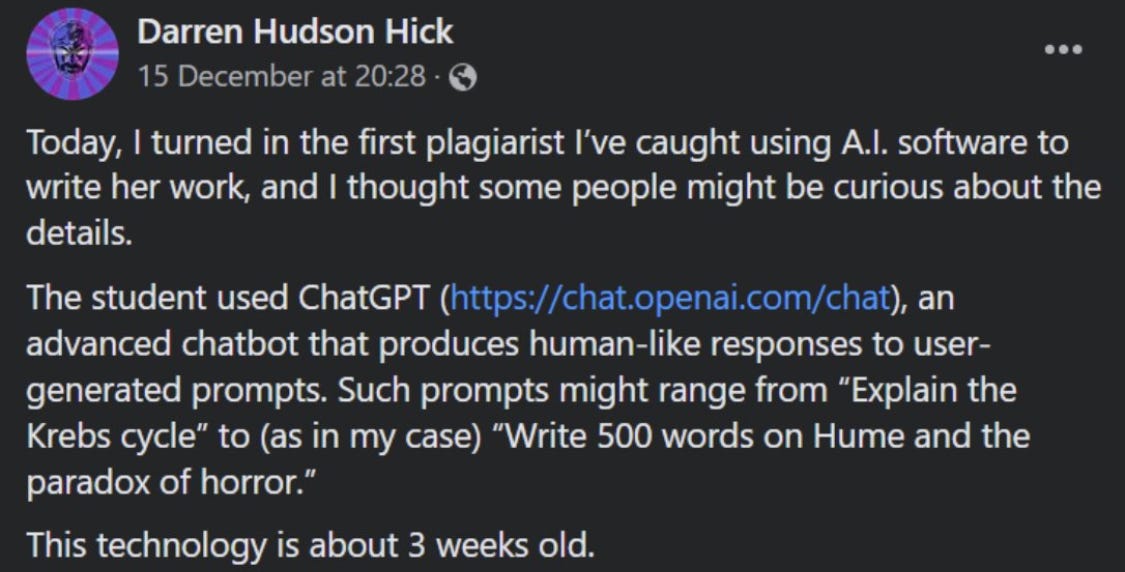

Funny Bonus

Time to restructure the education system yet? 😂

Thanks for reading until the end.

I want to ask you something. Are there any topics you would like me to cover? If you have any feedback, I would greatly appreciate it. Send it, I’ll take it!